High Bandwidth Memory (HBM): Why GPUs Need It for Machine Learning

September 18, 2025

Introduction

High Bandwidth Memory (HBM) is the primary memory technology used in modern data center GPUs, including NVIDIA’s A100 and H100, as well as AMD’s MI250. Unlike traditional DDR or GDDR memory, HBM is physically stacked right next to the GPU die and connected through a silicon interposer. This design delivers both high capacity and extremely high bandwidth — key requirements for training today’s large-scale machine learning models.

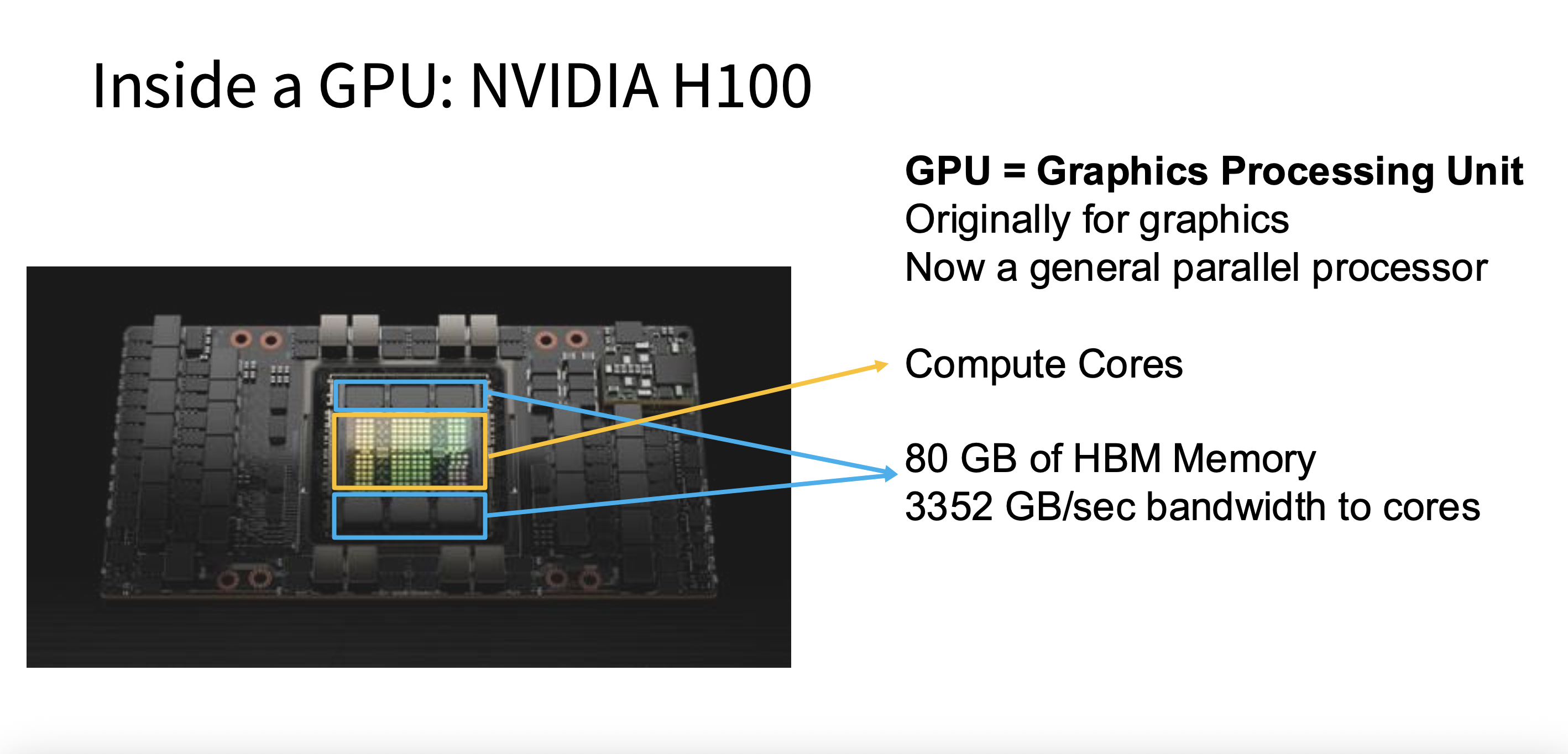

Figure 1: Components of the NVIDIA H100 GPU, including the GPU die, compute cores, as well as the HBM.

Why HBM Matters for Machine Learning

Training deep neural networks involves moving massive amounts of data: the model’s weights (its parameters), activations (intermediate results produced during forward passes), and gradients along with optimizer states (used during backpropagation). If the memory system can’t supply this data quickly enough, the GPU’s compute units stall and much of the available FLOPs go to waste. HBM ensures data flows in at terabyte-per-second speeds — 3.352 TB/s in the H100 as shown in Figure 1 — keeping CUDA and tensor cores busy and enabling efficient scaling to very large models.

Technical Background: How HBM Works

HBM is built from multiple DRAM dies stacked vertically on top of each other, which is where the "stacked" in its name comes from. This stack sits physically adjacent to the GPU die and connects to it through a silicon interposer — a layer of silicon with thousands of fine-pitch wires that enable incredibly dense communication. Rather than achieving speed through high clock frequencies, HBM uses an extremely wide memory interface (thousands of bits wide) that transfers large amounts of data each cycle. This approach delivers both high bandwidth and good power efficiency. Each HBM stack holds a few gigabytes, and modern GPUs integrate multiple stacks to reach total capacities like the 80 GB found on the NVIDIA H100.

Performance Metrics

When looking at GPU specifications, two numbers describe HBM performance. Capacity tells you how much model data can fit directly on the GPU — larger capacity means you can fit bigger models or larger batch sizes without having to offload data to slower CPU memory or split across multiple GPUs. Bandwidth tells you how quickly data can move between HBM and the compute cores. The NVIDIA H100, for example, provides 3,350 GB/s of bandwidth, compared to roughly 100 GB/s for a high-end CPU with DDR5. That's about a 30x difference, and it's the main reason GPUs can keep their tensor cores fed.

Real-World Implications for ML

More HBM capacity directly enables larger models and batch sizes to fit on a single GPU, reducing the need for complex multi-GPU setups just to handle your data. Higher bandwidth keeps the matrix multiplication units busy instead of idle, which translates directly to faster training. And because HBM consumes less energy per bit transferred compared to DDR or GDDR, it helps data centers stay within their power budgets while still delivering the throughput that modern training demands.

Key Takeaways

To put it all together: HBM is stacked memory that sits right next to the GPU die and connects through a silicon interposer, delivering terabytes per second of bandwidth — far beyond what DDR or GDDR can offer. For machine learning workloads, both capacity and bandwidth matter: capacity determines what you can fit on the GPU, and bandwidth determines how fast computation can actually proceed. Modern data center GPUs like the A100, H100, and AMD MI250 all rely on HBM to make large-scale training practical.